A Tribute to Paula Rego | Christiana Spens

I had been living in Glasgow for about six months when I went to see the Paula Rego exhibition over in Edinburgh, titled Obedience and

Today marks nine years since the publication of Capitalist Realism by Mark Fisher, a concept and work that he would apply and build upon

October marks Black History Month in the UK, and in its honor we have selected an extract from Decolonial Daughter: Letters from a Black Woman

On October 10th thirty years ago, London witnessed one of the most remarkable and intriguing cultural events of its modern history. A concert which used

In this edited extract from 1966 and Not All That, Sanaa Qureshi discusses the relationship between football and nationalism, and whether football can

This is an edited extract from David Stubbs’s 1996 and the End of History – available here and currently just £4.50 including UK postage in our half

In this edited extract from Games Without Frontiers, Joe Kennedy analyses the relationship between the World Cup, politics, nationalism and authentocracy. Games Without Frontiers (paperback + free ebook

As the campaign in Ireland to Repeal the 8th reaches its climax, here’s a guide to some movements from the US that are combining feminist

An edited extract from Authentocrats by Joe Kennedy, out on 21st June from Repeater. The Nine Yorkshiremen of the Apocalypse. On the Friday evening before the June

Next week we’ll be publishing Ryan Alexander Diduck’s Mad Skills: MIDI and Music Technology in the Twentieth Century, a cultural history of MIDI and its

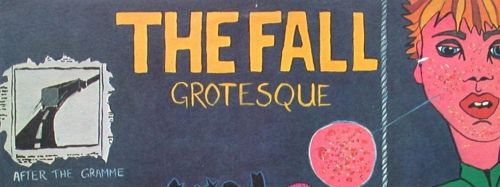

To commemorate the passing of Mark E Smith, below is Mark Fisher’s analysis of The Fall’s Grotesque (After the Gramme), from The Weird and the Eerie (2016).

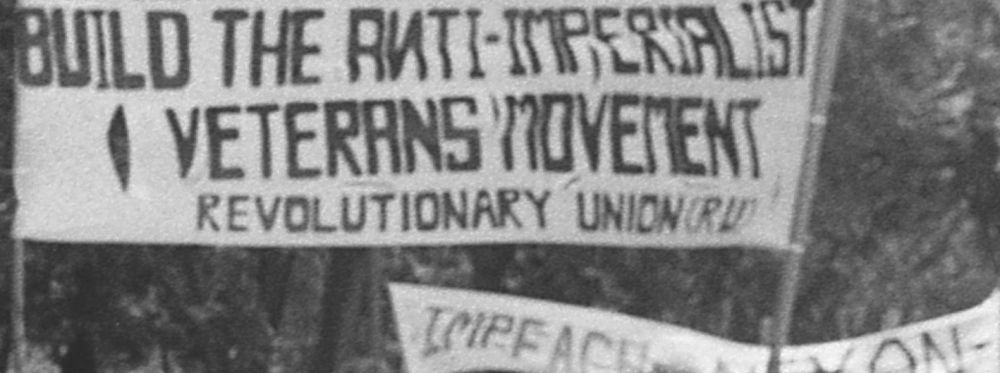

You can now read an extract from Aaron J Leonard and Conor A Gallagher’s A Threat of the First Magnitude – FBI Counterintelligence and Infiltration from the

In November we will be publishing a collection of Mark’s work – K-punk: The Collected Writings of Mark Fisher, edited by Darren Ambrose and with a

11 July 1968 – 13 January 2017 RIP K-PUNK In November we will be publishing a collection of Mark’s work – K-punk: The Collected Writings

We are marking our third year and the holiday season with, quaintly enough, poetry. Here is a selection from our authors and editors. Thank you for

This is an extract from The Neurotic Turn, a new anthology of writing around neuroses edited by Charles Johns, which is out now. In it, Graham Harman

This is part one of an edited extract from 1996 and the End of History by David Stubbs, published last year by Repeater. Part two

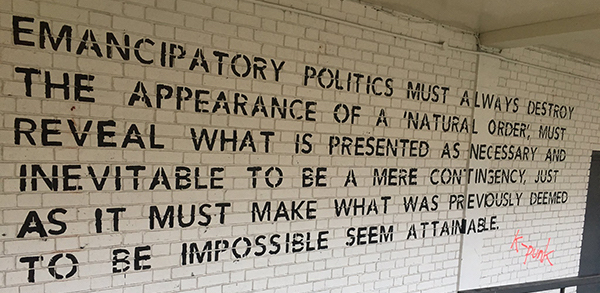

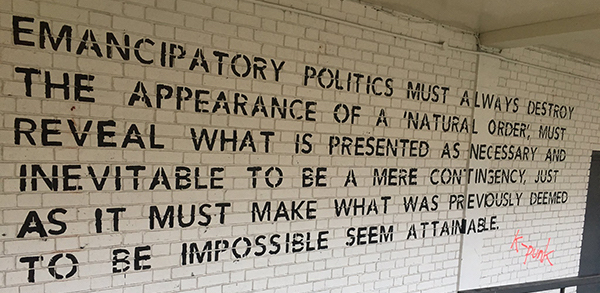

This is an edited extract from Richard Gilman-Opalsky’s Specters of Revolt: On the Intellect of Insurrection and Philosophy From Below (out now). He will be speaking at

Digital Taylorism: Labour Between Passion & Serendipity Attack of the Big Yawn In his fascinating historical study of the rise of happiness to the highly

In the middle of the night the telephone rang. Lior Tirosh picked up the phone and a voice said, “Run.” Tirosh stared blearily at the

This is an edited extract from Johanna Isaacson’s The Ballerina and the Bull: Anarchist Utopias in the Age of Finance (out now). By 1986 punk was

This is an extract from The Living and the Dead by Toby Austin Locke. There is a launch event at The Word bookshop (Goldsmiths) on 4th

In an extract from her recent book Lean Out, Dawn Foster explores the limits of self-proclaimed feminist Theresa May’s solidarity with women. The notorious Yarl’s

Graeme has a place waiting in the recently requisitioned Walpole Bay Hotel and Nick puts him in a USG minivan with a few other recent

Who dares dissent from the gospel according to Silicon Valley? There is – we are insistently told – no alternative to the invasion of capitalist

Backlash “There’s no such thing as the voiceless, only the deliberately silenced and the preferably unheard.”—Arundhati Roy Post-crash, countless studies have shown that the impact

….the shabby houses of La Villette and Bercy, where famous poets spilled wine across their tattered and eternally unfinished manuscripts while dashing to the floor

There was a point about four or five years ago, a point I’m not bothered about confirming archivally but which nonetheless definitely occurred, at which